Developers and TLS: what could possibly go wrong

Developers are usually no friends of TLS: they run everything without it during development and disable every protection that forbids unencrypted traffic. This habit can lead to problems in production. Let's examine how to run a local Keycloak instance with a perfectly valid TLS certificate.

The problem of not using TLS on developer machines

A long time ago, when Windows Communication Foundation was a thing, Microsoft shipped good automatic protections that prevented passing credentials in clear text over an unencrypted (non-TLS) channel. I was amazed by the number of solutions on the internet that, to solve the problem, suggested creating an insecure channel that skips this check, allowing clear-text credentials to be sent over a plain HTTP channel.

This leads the average developer to disable all the checks that prevent insecure behavior, with the risk that those bypasses are carried into the production version of the software.

The problem is that developers constantly run their code without TLS, and this can lead to disaster in production.

In the past TLS certificates cost money, but today they can be obtained for free, so there is no excuse not to use TLS everywhere, even during development.

If developers write code against unsecured channels, they end up writing code that can be used insecurely.

Even local intranet traffic should be encrypted; we MUST MOVE TO A WORLD WHERE ALL TRAFFIC IS ENCRYPTED, STARTING FROM THE DEVELOPER MACHINE WHERE THE CODE IS WRITTEN.

A bad solution: self-signed certificates

I think there is no need to explain why this is bad. In the past I have seen self-signed certificates used in production, with the company telling the people who had to connect to the service to install that certificate as a trusted root. Please, my eyes are bleeding. It is like telling someone: to use this car you need to keep this gallon of fuel on the passenger seat and drive with a lighter on all the time, and be sure not to blow everything up.

Installing an external certificate as a trusted root means that you allow whoever owns the corresponding private key to generate, for any site, a certificate that your machine considers valid. Basically you trust them to intercept TLS traffic for your machine, since they can now perform a man-in-the-middle attack on it.

Self-signed certificates are evil, please do not use them.

A slightly better solution - mkcert

On GitHub you can find mkcert, a nice utility that generates a test CA for the local machine and uses that CA to issue valid certificates for test sites. This is slightly better than self-signed certificates, because developers get used to working with certificates that are closer to those of a real CA. They generate a PFX file, import it and use it as if it had been issued by a real trusted CA.

Thanks to mkcert, no more self-signed certificates

But the situation is not completely different from self-signed certificates: the CA private key, a file called rootCA-key.pem, can be used to create certificates that are valid for that machine. The author of the package clearly states that you need to take care of that file, but the average developer usually reads the first lines of the readme and forgets to read the rest.

You can use this solution, but I strongly suggest that you remove the rootCA-key.pem file from its standard location, store it in some secure storage (like KeePass) and restore the key only when you need to generate another certificate.

Using the command mkcert -CAROOT you can find the location of the private key of the local CA; please store that file in a secure place.
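As a reminder of that advice, here is a minimal Python sketch that asks mkcert where its CA root folder is and reports whether the private key is still sitting there. It assumes mkcert is on the PATH; the helper names `mkcert_caroot` and `ca_key_present` are invented for this illustration and are not part of mkcert.

```python
import subprocess
from pathlib import Path

# File name of the CA private key, as mentioned in the mkcert readme.
CA_KEY_NAME = "rootCA-key.pem"


def mkcert_caroot():
    """Ask mkcert for its CA root folder (assumes mkcert is installed)."""
    result = subprocess.run(
        ["mkcert", "-CAROOT"], capture_output=True, text=True, check=True
    )
    return Path(result.stdout.strip())


def ca_key_present(caroot):
    """Return True if the CA private key is still in the given folder."""
    return (Path(caroot) / CA_KEY_NAME).is_file()
```

A developer could call `ca_key_present(mkcert_caroot())` from a periodic check and get a warning whenever the key has been left in the default location.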

The real best solution: certbot and Let's Encrypt

Nowadays almost everyone owns a domain, and any company can register one for the sole purpose of developing code; after all, it costs a few bucks and it is a real game changer in how developers use TLS. I made an example using my blog domain, codewrecks.com, which is not the best practice, but that domain only hosts my blog. A real company should register a dedicated domain such as codewrecks-dev.com and NEVER EVER HOST ANYTHING public on it. Use Cloudflare as the DNS for that domain, which simplifies the configuration of certbot.

First of all, install certbot on a Linux virtual machine. I followed the instructions to install certbot on a Debian virtual machine and in a few minutes (apart from a trivial error of mine, because I used an old configuration file) I had a machine that can generate good certificates for that domain. These certificates are valid: no hack, no custom CA, just a plain valid certificate like the one you will have in production.

Now let's suppose you are using Keycloak as Identity Provider. If you follow the vast majority of tutorials on the internet, they make you start Keycloak in a container without TLS on port 8080. This leads developers to write code that talks to an Identity Provider over a clear channel, which is blasphemy. Once developers have removed the checks that prevent using the OAuth2 code flow without TLS, there is the risk that this can be abused in production. As an example, in .NET code you must explicitly enable unencrypted traffic to your Identity Provider. Are you really sure that this bypass is automatically removed when the code goes to production?

Remember, safety checks are put in libraries to prevent disasters in production code; there is not a single reason to let developers disable those checks just to avoid using real TLS certificates on every machine, developer machines included.

Developers can put a switch in the code that disables TLS only during development and lets the check kick in for production code but, let's be honest, there is always the risk that the bypass ends up in production.
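To make the point concrete, here is a small Python sketch of a check with no development switch at all: the Identity Provider URL is accepted only if it uses https. The function name `require_https` is hypothetical and this is not the .NET library check mentioned above, only an illustration of the idea that the check stays unconditional once every machine has a valid certificate.

```python
from urllib.parse import urlsplit


def require_https(authority_url):
    """Return the URL unchanged if it uses https, otherwise raise.

    There is deliberately no 'development mode' flag: with a valid
    certificate on every machine the check never needs to be bypassed.
    """
    parts = urlsplit(authority_url)
    if parts.scheme != "https" or not parts.hostname:
        raise ValueError(
            f"Identity Provider URL must use https: {authority_url!r}"
        )
    return authority_url
```

With this in place, `require_https("https://keycloak.codewrecks.com")` passes, while the classic tutorial address `http://localhost:8080` raises a `ValueError` on every machine, development ones included.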

Now that I have a virtual machine that uses certbot to automatically generate and renew certificates for keycloak.codewrecks.com, I can use it to my advantage.

First step: use Cloudflare as DNS. It is free and fully supported by certbot, giving you the ability to generate certificates without opening port 80; just create an access token for that domain from your Cloudflare profile and certbot works like a charm.

I now have a virtual machine that automatically renews a certificate for keycloak.codewrecks.com.

Using the certificate from a developer machine

Each developer, the first time and whenever the certificate expires, needs to retrieve the new certificate from the virtual machine; using scp is the most common solution.

| |

The name of my certbot machine is certbotdev and certbot runs as root, so I need to access that machine as root. In a real, controlled enterprise environment you usually put these best practices in place:

- You use a dev domain, like codewrecks-dev.com, and do not host anything on that domain (this prevents a leaked valid certificate from causing damage). In this example I'm using my primary domain, because I have only my blog on it and will never host a Keycloak server on it.

- You NEVER EVER generate, from the certbotdev machine, a certificate that matches a DNS record where you host a real site (if that certificate and its key leak, the site can be impersonated).

- Only one or a few people hold the SSH key needed to run scp against that machine as root; those people write a script that automatically gets the certificates and places them in a shared folder that only developers can access.
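The script mentioned in the last point could look something like the following Python sketch. It assumes the standard certbot layout, where the current files for a certificate live under /etc/letsencrypt/live/<domain>/ as fullchain.pem and privkey.pem, and it assumes that scp and a suitable SSH key are available. The function names and the destination folder are made up for this example.

```python
import subprocess
from pathlib import PurePosixPath

# Files certbot keeps for each certificate (standard certbot layout).
CERT_FILES = ("fullchain.pem", "privkey.pem")


def build_scp_commands(domain, dest_dir, host="certbotdev", user="root"):
    """Build one scp command per certificate file, without running it."""
    live_dir = PurePosixPath("/etc/letsencrypt/live") / domain
    return [
        ["scp", f"{user}@{host}:{live_dir / name}", str(dest_dir)]
        for name in CERT_FILES
    ]


def fetch_certificates(domain, dest_dir):
    """Copy certificate and key into dest_dir (hypothetical shared folder)."""
    for command in build_scp_commands(domain, dest_dir):
        subprocess.run(command, check=True)
```

Keeping the command construction separate from its execution makes it easy to review exactly what will be copied before handing the script to the few people that hold the SSH key.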

Remember that this is a dev certificate for something that will never be used in production, so even if the certificate leaks the damage to the company is small. After that I configured the Cloudflare DNS to point keycloak.codewrecks.com to a local address.

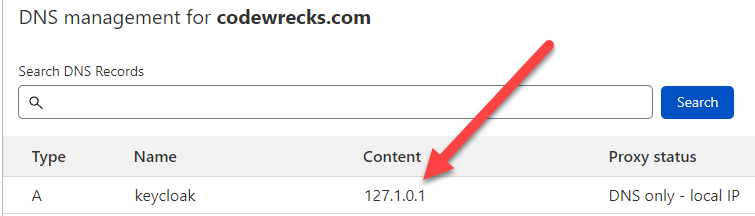

Figure 1: DNS record that points to local IP


As you can see, in my public DNS in Cloudflare I'm declaring that keycloak.codewrecks.com is a local address. This further protects the certificate: if it leaks, the name does not point to any reachable server, so it is much harder for someone to abuse that URL with a valid certificate.

You should also notice that the IP is not 127.0.0.1 but 127.1.0.1. It is amazing how many developers do not know that the loopback range is the entire 127.0.0.0/8 network. You can use millions of local IPs and avoid using a different port for each application or microservice you develop. In this situation, even if the Keycloak server is started in a local Docker container, I can bind port 8443 (the default TLS port in the Keycloak Docker image) only to IP 127.1.0.1, leaving all the other loopback IPs for other applications.
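If you want to convince yourself of the size of the loopback range, a few lines of Python with the standard `ipaddress` module are enough. The helper name `is_loopback` is just for this illustration.

```python
import ipaddress

# The whole loopback network, not just 127.0.0.1.
LOOPBACK = ipaddress.ip_network("127.0.0.0/8")


def is_loopback(address):
    """Return True if the address belongs to 127.0.0.0/8."""
    return ipaddress.ip_address(address) in LOOPBACK


print(LOOPBACK.num_addresses)    # 16777216 addresses in the range
print(is_loopback("127.1.0.1"))  # True: the address used for Keycloak
print(is_loopback("128.0.0.1"))  # False: outside the loopback range
```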

After this I created a docker-compose YAML file for an instance of Keycloak that runs with its internal database but answers only over HTTPS, with a valid TLS certificate issued by a valid CA. Every developer can simply grab the certificate files, copy them to the local machine and start Keycloak in Docker.

| |

As you can see there are some interesting parts. In line 9 I tell Docker to bind internal port 8443 to external port 443 only on IP 127.1.0.1. Since I created a valid public DNS record for that server, all developers of the team refer to the local Keycloak server as https://keycloak.codewrecks.com, with a perfectly valid certificate; a solution that is much better than self-signed certificates and sites running on https://localhost:8443 or some other weird port.

I moved the certificate and private key from the certbot machine to the s:\secure folder (the disk is BitLocker protected and the s:\secure folder is encrypted for my current user), then I configured Docker to mount those two files in the location expected by the image, under /etc/x509/https. The basic Keycloak image does not support a password-protected key file, so the key file is unprotected (consult this post for a possible solution).

The result

Now a developer can simply get the certificate and the private key from the certbot machine or from a protected location, place them in s:\secure\ (or parametrize the docker-compose file so that each developer can specify the file locations) and just run docker-compose -f filename up.

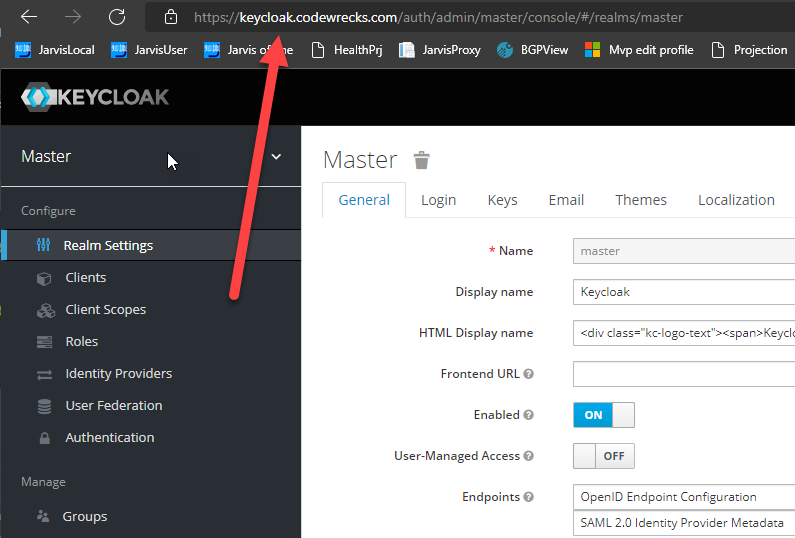

The result is that every developer now has a local Keycloak instance running smoothly with a valid TLS certificate, answering to a common name used by all developers.

Figure 2: Local Keycloak server running in local Docker but with a perfectly valid certificate
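Besides looking at the browser padlock, the same result can be verified from code. The following Python sketch connects with the default trust store (so no custom CA and no disabled validation) and reports how many days remain before the certificate must be fetched again. The function names are invented for this example; the host and port are the ones used in this post.

```python
import socket
import ssl
import time

SECONDS_PER_DAY = 86400


def days_until_expiry(not_after, now=None):
    """Days left before the 'notAfter' date of a certificate."""
    expires = ssl.cert_time_to_seconds(not_after)
    if now is None:
        now = time.time()
    return (expires - now) / SECONDS_PER_DAY


def check_server(host, port=443, timeout=5.0):
    """Connect with full validation and return the days left on the cert.

    The handshake raises ssl.SSLCertVerificationError if the certificate
    is not trusted or does not match the host name.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()
    return days_until_expiry(cert["notAfter"])
```

Calling `check_server("keycloak.codewrecks.com")` while the container is running should return a positive number of days; when it gets close to zero it is time to grab the renewed files from the certbot machine.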


Honestly, now that certificates are free, please teach your developers to use real, valid certificates even on development machines; in the long run this approach pays off big in security.

Gian Maria.